02 — Showcase

Live Output Demos

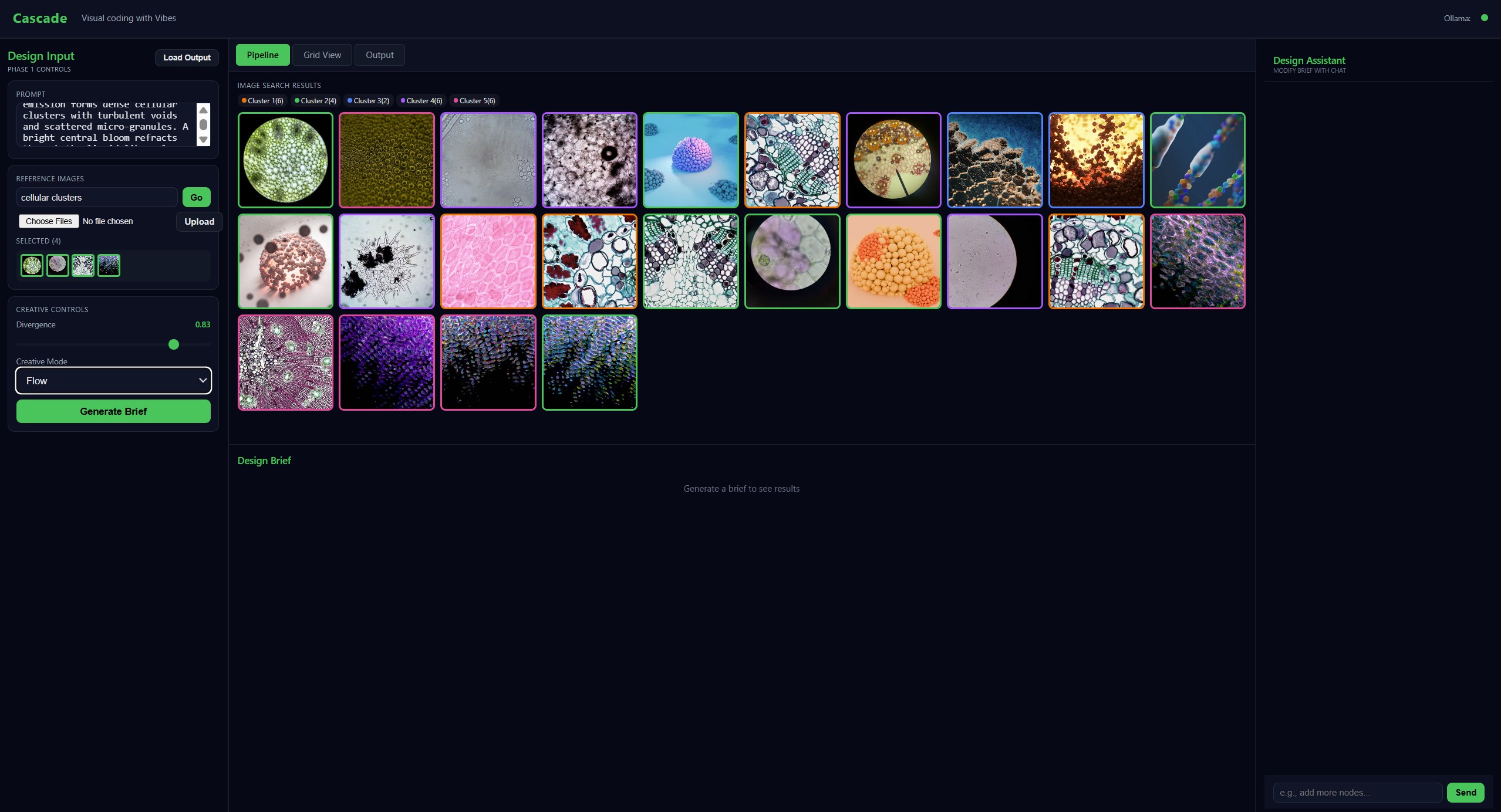

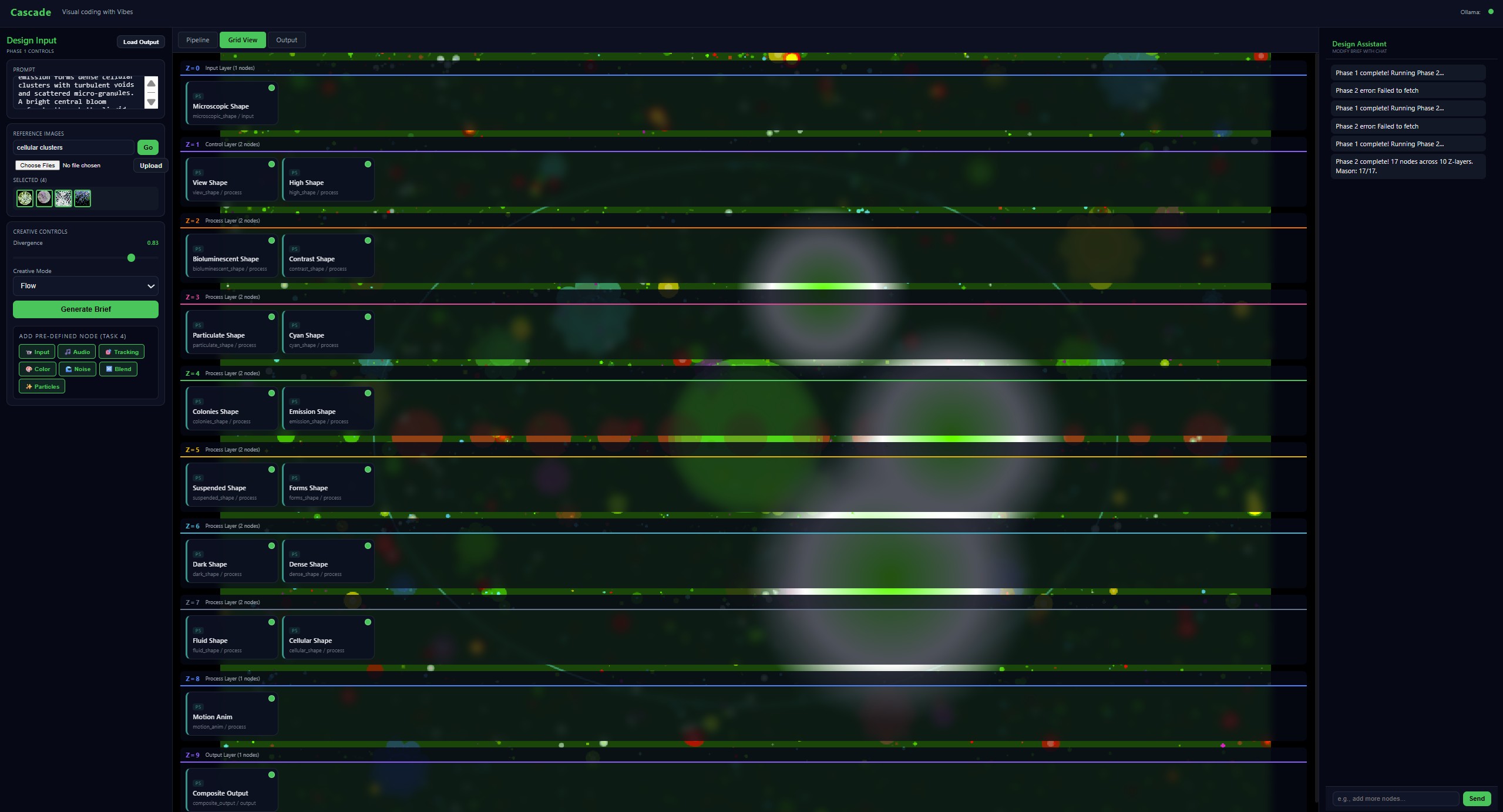

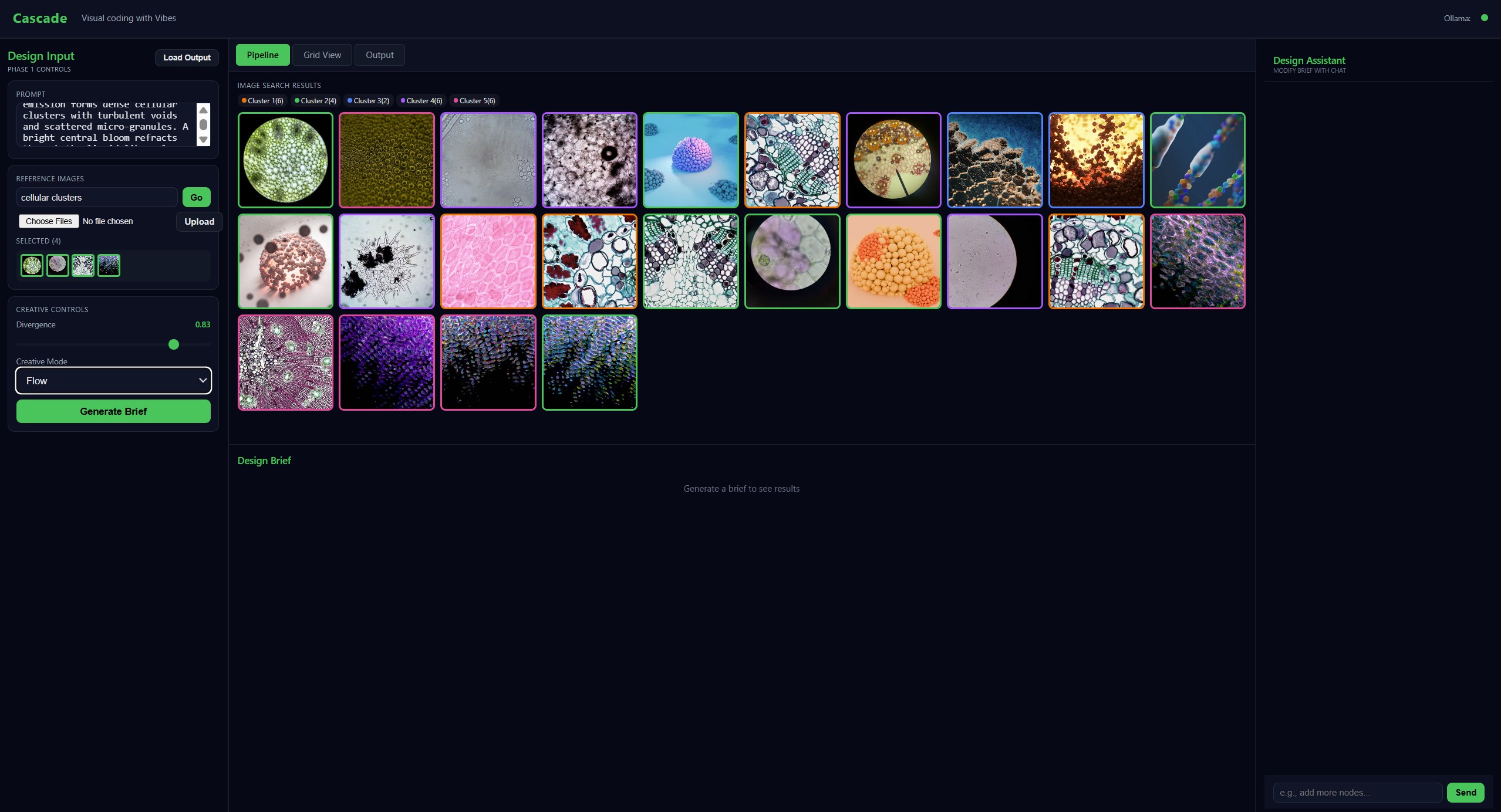

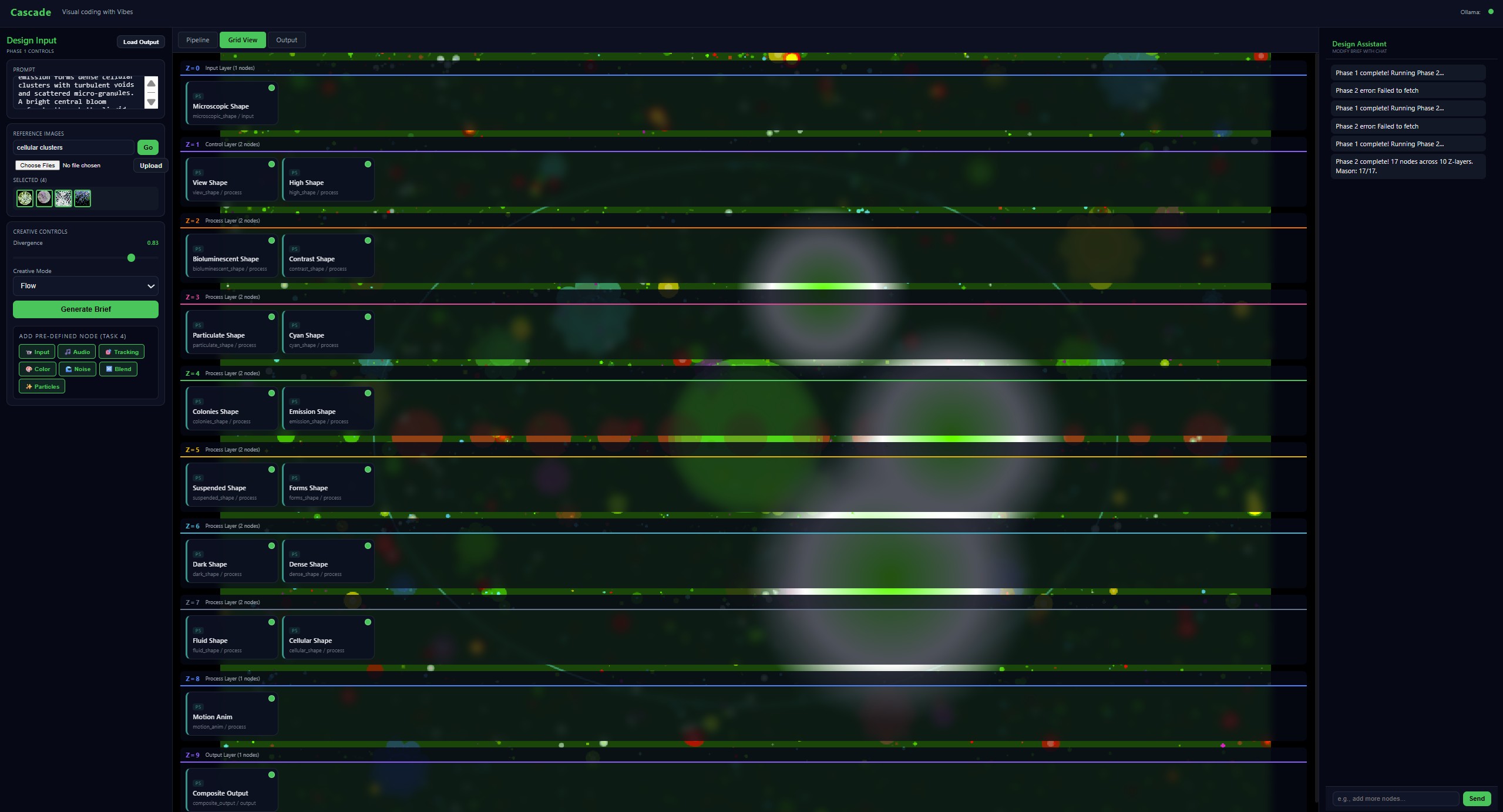

All outputs shown here were generated directly by Cascade from natural-language prompts; no code was written by hand.

Interface & Editor Screenshots

Prompt-Driven Generation of Visual Node Graphs in Volumetric Space via Agentic Chain-of-Influence Synthesis

A mixed-initiative AI design assistant that transforms a natural-language brief directly into a fully executable, browser-rendered volumetric node graph — no code required, no GPU shader knowledge necessary.

01 — Overview

Professional node-graph tools for creative visual coding, used in motion graphics, live performance, and interactive installations, impose prohibitive cognitive barriers: users must master node definitions, GPU shader authoring, signal routing, and engine-specific APIs before producing any meaningful output. We present Cascade, an AI design assistant that removes these barriers by transforming a natural-language brief (and optional reference images) directly into a fully executable, browser-based volumetric compositing stack of nodes.

Rather than replacing the user's creative agency, Cascade acts as a co-designer: it interprets intent, proposes structure, generates code, and sustains a live repair loop, while the user retains full control over the resulting graph through an interactive grid editor.

Cascade operates through a two-phase agentic pipeline. Phase 1 applies CLIP-based multimodal analysis to extract brand emotions, computes per-level creative divergence scores, and retrieves node archetypes via a knowledge-graph RAG system. Phase 2 runs the Chain-of-Influence pipeline: a Reasoner agent designs a typed influence graph, a deterministic Compiler instantiates a volumetric grid, and the Mason agent generates polyglot code (GLSL, p5.js, Three.js, WebAudio) for every node with headless validation.

A RuntimeInspector agent closes an autonomous repair loop by routing all browser-side errors to a large language model for hot-reload patching.
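To make the "typed influence graph, then deterministic Compiler" step more concrete, here is a minimal Python sketch. It is not Cascade's actual compiler: the `Edge` type, the `compile_to_grid` function, and the placement rule (column = longest path from a source node, row = alphabetical order within a column) are all assumptions chosen for illustration, and the Z axis of the volumetric grid is omitted for brevity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Edge:
    """Hypothetical typed influence edge: `source` drives `target`."""
    source: str
    target: str
    kind: str  # assumed influence type, e.g. "texture" or "audio"


def compile_to_grid(nodes: list[str], edges: list[Edge]) -> dict[str, tuple[int, int]]:
    """Deterministically place every node at a (column, row) grid cell.

    Assumed rule: column = length of the longest path from any source node,
    row = running index of nodes already placed in that column.
    """
    incoming: dict[str, list[str]] = {}
    for edge in edges:
        incoming.setdefault(edge.target, []).append(edge.source)

    depth: dict[str, int] = {}

    def depth_of(node: str, visiting: tuple[str, ...] = ()) -> int:
        if node in visiting:
            raise ValueError(f"influence graph has a cycle through {node!r}")
        if node not in depth:
            parents = incoming.get(node, [])
            depth[node] = 1 + max(
                (depth_of(p, visiting + (node,)) for p in parents), default=-1
            )
        return depth[node]

    next_row: dict[int, int] = {}
    grid: dict[str, tuple[int, int]] = {}
    for node in sorted(nodes):  # sorting makes the layout independent of input order
        column = depth_of(node)
        row = next_row.get(column, 0)
        grid[node] = (column, row)
        next_row[column] = row + 1
    return grid
```

Because the placement depends only on the graph itself, the same influence graph always yields the same layout, which is the property a deterministic compiler stage is meant to provide.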

03 — Architecture

A natural-language brief flows through a semantic divergence engine and a chain-of-influence synthesiser, producing a live, repairable node graph in the browser — with zero code authored by the user.

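Phase 1: semantic divergence engine (CLIP-based multimodal analysis, per-level creative divergence scores, knowledge-graph RAG retrieval of node archetypes).

Phase 2: Chain-of-Influence synthesiser (Reasoner, deterministic Compiler, Mason, RuntimeInspector).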

All executors share a central WebGL2 texture hub for GPU allocation and Z-layer compositing.

04 — Contributions

A single dial D_global ∈ [0,1] propagates through the semantic model to affect graph topology, LLM sampling temperature (0.2–1.3), and parameter ranges — giving users expressive control without technical knowledge.
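As an illustration of how one dial could drive the sampling temperature, the sketch below maps D_global onto the stated 0.2–1.3 range. The linear interpolation and the function name `temperature_for` are assumptions; the source gives only the endpoints of the range, not the shape of the mapping.

```python
def temperature_for(d_global: float, t_min: float = 0.2, t_max: float = 1.3) -> float:
    """Map the divergence dial D_global in [0, 1] to an LLM sampling temperature.

    Assumption: a plain linear interpolation between the two endpoints.
    """
    if not 0.0 <= d_global <= 1.0:
        raise ValueError("d_global must lie in [0, 1]")
    return t_min + d_global * (t_max - t_min)
```

Under this assumed mapping, a conservative brief (dial near 0) samples close to temperature 0.2, while a highly divergent one (dial near 1) approaches 1.3.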

Mason generates and validates polyglot GPU shader, particle, and audio code per node. A Triple Filter (structural → syntax → performance) enforces BuildSheet contracts before any code runs in the browser.
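The staged ordering of the Triple Filter can be sketched as a short-circuiting chain of checks. Everything concrete here is hypothetical: the `BuildSheet` fields, the individual checks (a bracket-balance test stands in for a real GLSL compile, and a loop count stands in for a real performance budget), and the function names. Only the stage order (structural, then syntax, then performance) comes from the description above.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BuildSheet:
    """Hypothetical per-node contract that generated code must satisfy."""
    node_id: str
    required_uniforms: tuple[str, ...]
    max_loops: int = 4


def structural_ok(src: str, sheet: BuildSheet) -> bool:
    # The shader must define main() and declare every uniform the contract names.
    has_main = re.search(r"\bvoid\s+main\s*\(", src) is not None
    has_uniforms = all(
        re.search(rf"\buniform\s+\w+\s+{re.escape(name)}\s*;", src)
        for name in sheet.required_uniforms
    )
    return has_main and has_uniforms


def syntax_ok(src: str, sheet: BuildSheet) -> bool:
    # Stand-in for a real compile step: brackets must be balanced and nested.
    closers = {")": "(", "}": "{", "]": "["}
    stack: list[str] = []
    for ch in src:
        if ch in "({[":
            stack.append(ch)
        elif ch in closers:
            if not stack or stack.pop() != closers[ch]:
                return False
    return not stack


def performance_ok(src: str, sheet: BuildSheet) -> bool:
    # Stand-in budget: cap the number of loop statements in the source.
    return len(re.findall(r"\b(?:for|while)\s*\(", src)) <= sheet.max_loops


FILTERS = (
    ("structural", structural_ok),
    ("syntax", syntax_ok),
    ("performance", performance_ok),
)


def triple_filter(src: str, sheet: BuildSheet) -> Optional[str]:
    """Return the name of the first failing stage, or None if all stages pass."""
    for name, check in FILTERS:
        if not check(src, sheet):
            return name
    return None
```

Running the cheapest check first means obviously malformed output is rejected before any costlier analysis is attempted.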

The RuntimeInspector intercepts browser-side failures (GLSL precision bugs, ESM export issues, hallucinated APIs) and hot-reloads LLM-generated fixes autonomously. Across 4,510 generated nodes, 97% reach the approved state.
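The control flow of such a repair loop can be sketched in a few lines. This is an assumption-laden outline, not the RuntimeInspector itself: `run` stands in for executing a node in the browser and returning an error message (or `None` on success), `patch` stands in for the LLM call that rewrites the code, and the attempt limit is invented.

```python
from typing import Callable, Optional


def repair_loop(
    code: str,
    run: Callable[[str], Optional[str]],
    patch: Callable[[str, str], str],
    max_attempts: int = 3,
) -> tuple[str, bool]:
    """Run the code, patch it on failure, and report whether it was approved.

    `run` returns an error message or None; `patch` returns revised code.
    Both are hypothetical stand-ins for the browser runtime and the LLM.
    """
    for _ in range(max_attempts):
        error = run(code)
        if error is None:
            return code, True
        code = patch(code, error)
    # The last patch has not been executed yet, so check it once more.
    return code, run(code) is None
```

Bounding the number of attempts keeps a node that cannot be fixed from looping forever; such a node is simply returned as not approved.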

All edits, repairs, and predefined node additions write back to a canonical Session JSON automatically. Drag the file back into the editor to restore the exact design state across sessions.
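A round trip through a session file might look like the following sketch. The schema shown (a `version` field plus a list of node records) and the function names are assumptions for illustration; the actual Session JSON layout is not described here.

```python
import json

SESSION_VERSION = 1  # hypothetical schema version


def serialize_session(nodes: list[dict]) -> str:
    """Serialise the design state to a canonical JSON string."""
    payload = {"version": SESSION_VERSION, "nodes": nodes}
    # sort_keys gives a stable, diff-friendly file for identical states.
    return json.dumps(payload, indent=2, sort_keys=True)


def restore_session(text: str) -> list[dict]:
    """Rebuild the node list from a previously saved session string."""
    payload = json.loads(text)
    if payload.get("version") != SESSION_VERSION:
        raise ValueError("unsupported session version")
    return payload["nodes"]
```

Writing keys in sorted order means two identical design states always produce byte-identical files, which is one plausible reading of "canonical".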

A chat-based post-generation copilot lets users edit the live graph in plain language via 7 tool calls: create_node, duplicate_node, regenerate_node, update_parameters, delete_node, move_node, replace_concept.
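One way to picture the copilot's tool layer is a dispatch table keyed by the seven tool names listed above. The tool names come from the text; the argument names, the in-memory graph shape, and the `revision` counter (which stands in for actually regenerating a node's code) are assumptions.

```python
import copy

# Hypothetical graph shape: node_id -> {"concept", "position", "parameters", "revision"}


def create_node(graph, node_id, concept, position):
    graph[node_id] = {"concept": concept, "position": list(position),
                      "parameters": {}, "revision": 0}


def duplicate_node(graph, node_id, new_id):
    graph[new_id] = copy.deepcopy(graph[node_id])


def regenerate_node(graph, node_id):
    graph[node_id]["revision"] += 1  # stand-in for re-running code generation


def update_parameters(graph, node_id, parameters):
    graph[node_id]["parameters"].update(parameters)


def delete_node(graph, node_id):
    del graph[node_id]


def move_node(graph, node_id, position):
    graph[node_id]["position"] = list(position)


def replace_concept(graph, node_id, concept):
    graph[node_id]["concept"] = concept


TOOLS = {fn.__name__: fn for fn in (
    create_node, duplicate_node, regenerate_node, update_parameters,
    delete_node, move_node, replace_concept,
)}


def apply_tool_call(graph: dict, call: dict) -> None:
    """Apply one tool call of the assumed form {"name": ..., "arguments": {...}}."""
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    TOOLS[name](graph, **call.get("arguments", {}))
```

Rejecting any name outside the table guards against the model inventing a tool that does not exist.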

Seven hardened, guaranteed-compile nodes (Video Input, Audio Input, Tracking, Color, Noise, Blend, Particles) provide a zero-code on-ramp for beginners to augment any generated pipeline instantly.

05 — Node Library

Seven hardened nodes ship with guaranteed-compile templates, available as one-click augmentation to any live pipeline — no prompt required.

| Node | Engine | Role | Key Parameters |
|---|---|---|---|
| Video Input | html_video | Source | Webcam / file / URL, flip |
| Audio Input | webaudio | Source | Mic / file, FFT bins, gain |
| Tracking | canvas2d | Process | Face / hand / body (ml5.js) |
| Color Node | glsl | Process | Hue, exposure, saturation |
| Noise Generator | glsl | Source | Perlin / Simplex / FBM, frequency |
| Blend Node | glsl | Process | 9 blend modes, opacity |
| Particle Node | p5 | Source | Count, shape, speed, links |

06 — Technology

Cascade generates and executes code across six polyglot engine types, all sharing a single WebGL2 compositing context.

The full Cascade system — agentic pipeline, browser runtime, node library, and session editor — is available on GitHub.

github.com/debayanmkrj/Cascade